The retail price of the NVIDIA H100 held remarkably steady between $25,000 and $40,000 from mid-2024 into early 2026, yet the secondary market revealed a far more volatile reality. During the peak of global scarcity in mid-2024, used and refurbished H100s frequently traded as high as $50,000 per unit, a premium of at least 25% over the top of the official price range. As supply chains normalized and newer architectures emerged, these prices dropped sharply, suggesting that for enterprise AI, the sticker price was rarely the true market-clearing price. Instead, the secondary market emerged as the primary barometer of the industry's actual supply-and-demand dynamics.

This parallel market for used and refurbished data center GPUs has quietly matured into a foundational layer of global AI infrastructure. It serves three critical functions: lowering the capital expenditure (CapEx) barrier for "neocloud" operators building competitive GPU capacity, providing essential capital recovery for hyperscalers rotating through hardware generations, and establishing pricing signals that dictate how the industry approaches depreciation and hardware lifecycle planning. Despite its scale, the B2B secondary market remains under-covered by mainstream technology media, which continues to focus on consumer-level resale of gaming-grade hardware. In reality, the professional secondary market for enterprise GPUs like the A100 and H100 is a sophisticated ecosystem of IT asset disposition (ITAD) vendors, specialized resellers, and institutional buyers.

The Architecture of the B2B Secondary Market

The secondary GPU market is a business-to-business (B2B) ecosystem focused on the acquisition, refurbishment, and resale of high-performance data center GPUs and the server systems that house them. This market is distinct from the consumer secondhand market; it deals in massive contracts, certified data sanitization, and rigorous hardware testing.

The supply side of this market is dominated by hyperscale cloud providers—Amazon Web Services (AWS), Google Cloud, and Microsoft Azure—who are constantly rotating their fleets to stay at the frontier of performance. When these giants deploy Blackwell-generation hardware, the Hopper (H100) and Ampere (A100) systems they replace enter the secondary market through ITAD vendors. Additional supply stems from enterprises consolidating AI projects and former cryptocurrency miners who repurposed their infrastructure for AI workloads but are now seeking to exit or upgrade.

On the demand side, the market is led by neocloud operators. These providers, such as Lambda Labs and CoreWeave, often build capacity using a hybrid of new and used hardware to offer lower rental rates than hyperscalers. By some industry estimates, approximately one-third of global AI workloads now run on neoclouds, making them the primary source of demand for refurbished enterprise chips. Smaller research institutions, budget-conscious enterprises, and companies in regions with restricted access to new NVIDIA silicon also rely on these channels to secure compute power.

Intermediaries play a vital role in ensuring liquidity and trust. Firms like Procurri and Bitpro specialize in the logistics of acquiring and testing hardware from hyperscalers. Enterprise resellers such as Alta Technologies and NewServerLife provide multi-point inspections and warranties on refurbished units, bridging the gap between "used" and "enterprise-ready." The distinction is financially significant: refurbished H100s often command a 15% to 25% premium over "as-is" used units because they mitigate the operational risk for the buyer.
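The reasoning behind the refurbishment premium can be framed as a simple expected-cost comparison. The sketch below is a hypothetical model, not a vendor pricing formula: it assumes a refurbished unit removes early-failure risk entirely, and the as-is price, failure probability, and failure cost (replacement, downtime, labor) are illustrative inputs rather than market data.

```python
def premium_cost(as_is_price: float, premium_rate: float) -> float:
    """Extra dollars paid for a refurbished unit over an as-is unit."""
    return as_is_price * premium_rate


def break_even_failure_prob(as_is_price: float, premium_rate: float,
                            failure_cost: float) -> float:
    """Failure probability at which the premium equals the expected failure cost.

    Hypothetical model: the refurbished unit is assumed to carry no failure risk,
    while an as-is unit fails with some probability at a cost of failure_cost.
    """
    return premium_cost(as_is_price, premium_rate) / failure_cost


def refurb_is_worth_it(as_is_price: float, premium_rate: float,
                       failure_prob: float, failure_cost: float) -> bool:
    """True when the expected cost of an as-is failure exceeds the premium."""
    return failure_prob * failure_cost > premium_cost(as_is_price, premium_rate)


if __name__ == "__main__":
    # Illustrative inputs only: a $20,000 as-is unit and a $30,000 failure cost.
    for rate in (0.15, 0.25):
        p = break_even_failure_prob(20_000, rate, 30_000)
        print(f"{rate:.0%} premium breaks even at a {p:.1%} failure probability")
```

Under these assumed inputs, a buyer who expects more than roughly 10% to 17% of as-is units to fail is better off paying the 15% to 25% premium.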

Comparative Pricing and the Generation Gap

As of early 2026, the secondary market shows a clear bifurcation between the aging but liquid A100 series and the more volatile H100 series. While public listings often reflect reseller "asks," private bulk transactions frequently clear at lower rates, creating a complex pricing environment.

The NVIDIA A100: The Secondary Workhorse

Released in May 2020, the A100 remains the most actively traded enterprise GPU. Refurbished A100 40GB units are currently listed at approximately $7,800, while the 80GB PCIe variants trade for roughly $18,900. The market for A100s is characterized by steady turnover rather than a "flood" of inventory. As more enterprises move toward Blackwell and Rubin architectures, A100 prices are projected to decline by another 10% to 15% through the end of 2026. For many organizations, the A100 remains the most economical choice for LoRA (Low-Rank Adaptation) fine-tuning of 7B to 13B parameter models and inference on quantized models.
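Both claims in this paragraph reduce to simple arithmetic. The sketch below projects the quoted listing prices forward under the 10% to 15% decline and runs a rough weights-only memory check for the fine-tuning and quantized-inference claims. The bytes-per-parameter values are standard for these precisions, but the 70% headroom factor (reserving the rest of VRAM for activations, LoRA adapter and optimizer state, and KV cache) is an assumption, not a measured requirement.

```python
# Approximate storage per parameter for common weight precisions.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def projected_price_range(price: float, low_decline: float = 0.10,
                          high_decline: float = 0.15) -> tuple[float, float]:
    """Return (low, high) prices after the projected percentage decline."""
    return (round(price * (1 - high_decline), 2),
            round(price * (1 - low_decline), 2))


def weights_gb(params_billion: float, dtype: str) -> float:
    """Weight footprint in GB (1 GB = 1e9 bytes); the billions cancel out."""
    return params_billion * BYTES_PER_PARAM[dtype]


def fits_on_gpu(params_billion: float, dtype: str, vram_gb: float,
                headroom: float = 0.7) -> bool:
    """Assumed rule of thumb: weights may use at most `headroom` of VRAM."""
    return weights_gb(params_billion, dtype) <= vram_gb * headroom


if __name__ == "__main__":
    print(projected_price_range(7_800))    # A100 40GB listing
    print(projected_price_range(18_900))   # A100 80GB PCIe listing
    for size in (7, 13):
        print(f"{size}B fp16 on A100 40GB: {fits_on_gpu(size, 'fp16', 40)}")
```

Under these assumptions, a 13B model's FP16 weights (about 26 GB) leave roughly 14 GB of an A100 40GB for LoRA adapters and activations, while 4-bit quantization shrinks the same weights to about 6.5 GB for inference.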

The NVIDIA H100: Volatility and Market Resets

The H100 has experienced some of the most dramatic price swings in the history of data center silicon. After trading at a steep premium in 2024, the market reset in late 2025 following a reported 44% price cut by AWS on H100 instances. By early 2026, a used 8-GPU H100 server typically trades between $150,000 and $180,000, while a new B300 server can cost upwards of $500,000. Measured against that $500,000 floor, the used system costs roughly 64% to 70% less, a discount that makes refurbished H100 systems highly attractive for providers operating on a GPU-as-a-Service (GPUaaS) model.
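The discount follows directly from the quoted server prices. A minimal sketch, assuming a new B300 server at exactly $500,000 (the floor of "upwards of $500,000"):

```python
def discount_pct(used_price: float, new_price: float) -> float:
    """Percentage saved by buying the used system instead of the new one."""
    return (1 - used_price / new_price) * 100


B300_PRICE = 500_000  # assumed floor price for a new B300 server

for used in (150_000, 180_000):  # quoted range for a used 8-GPU H100 server
    print(f"${used:,} used vs ${B300_PRICE:,} new: "
          f"{discount_pct(used, B300_PRICE):.0f}% discount")
```

Because the B300 figure is a floor, these percentages are a lower bound on the discount for any given used price; a more expensive B300 configuration widens the gap.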

However, savings on the GPU itself can be offset by rising component costs. From late 2025 into 2026, prices for DDR5 memory and server-grade SSDs rose sharply under AI-driven supply strain. Analysts suggest that if a used server requires a full memory and storage refresh, the cost advantage of buying on the secondary market can shrink by as much as 20%.
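One way to read the 20% figure is as the share of the new-versus-used price gap consumed by refresh spending. The sketch below adopts that interpretation (an assumption; the analysts' method is not stated) and uses the $150,000 used H100 and $500,000 B300 prices quoted earlier. The refresh cost it produces is derived from those figures, not a component quote.

```python
def remaining_advantage(used_price: float, new_price: float,
                        refresh_cost: float) -> tuple[float, float]:
    """Return (dollar advantage left after a refresh, fraction of the gap eroded)."""
    gap = new_price - used_price
    return gap - refresh_cost, refresh_cost / gap


def refresh_cost_for_erosion(used_price: float, new_price: float,
                             erosion: float) -> float:
    """Refresh spend that would erode the given fraction of the price gap."""
    return (new_price - used_price) * erosion


if __name__ == "__main__":
    cost = refresh_cost_for_erosion(150_000, 500_000, 0.20)
    left, eroded = remaining_advantage(150_000, 500_000, cost)
    print(f"refresh ${cost:,.0f} -> ${left:,.0f} advantage left ({eroded:.0%} eroded)")
```

Under this reading, a used server would need roughly $70,000 of new memory and storage to lose a fifth of its cost edge, and it would still remain well below the price of the new system.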

The Great Depreciation Debate: 3 Years or 9 Years?

The most contentious question in AI infrastructure today is the actual economic lifespan of a data center GPU. The answer dictates whether hyperscalers are overstating their earnings and whether secondary market buyers are making a sound investment.

Between 2020 and 2024, the industry standard for server depreciation lengthened from 3–4 years to 5–6 years. In early 2025, however, this consensus fractured. Amazon shortened the useful life of some servers from six years back to five, citing the rapid pace of AI development, a move that resulted in a $677 million reduction in net income for the first nine months of 2025. Meta, by contrast, extended its schedule to 5.5 years, a change that reduced its depreciation expense by roughly $2.9 billion.
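The income effect of a useful-life change comes from straight-line depreciation, where annual expense equals cost divided by useful life. A minimal sketch, assuming zero salvage value and a hypothetical $1 billion server fleet (not Amazon's or Meta's actual asset base):

```python
def annual_depreciation(cost: float, useful_life_years: float,
                        salvage: float = 0.0) -> float:
    """Straight-line depreciation expense per year."""
    return (cost - salvage) / useful_life_years


FLEET_COST = 1_000_000_000  # hypothetical fleet cost

for life in (3, 4, 5, 5.5, 6):
    print(f"{life:>4} years: ${annual_depreciation(FLEET_COST, life):,.0f} per year")

# Shortening 6 -> 5 years (Amazon's direction) raises annual expense;
# lengthening 5 -> 5.5 years (Meta's direction) lowers it.
shorter = annual_depreciation(FLEET_COST, 5) - annual_depreciation(FLEET_COST, 6)
longer = annual_depreciation(FLEET_COST, 5.5) - annual_depreciation(FLEET_COST, 5)
print(f"6 -> 5 years: +${shorter:,.0f}; 5 -> 5.5 years: -${abs(longer):,.0f}")
```

Every dollar of added annual expense reduces pre-tax income by the same amount, which is why a half-year change in schedule moves reported earnings at hyperscaler scale.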

Investor Michael Burry has famously challenged these long schedules, arguing that NVIDIA’s annual chip release cycle renders hardware economically obsolete in just 2 to 3 years. Burry estimates that the industry may have understated depreciation by as much as $176 billion between 2026 and 2028. His logic is based on performance leaps; for instance, the Blackwell architecture offers up to 25 times the energy efficiency of Hopper for specific inference tasks.
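Burry's argument is essentially a timing mismatch: if hardware loses its economic value over about three years but is depreciated over six, booked expense lags economic reality in the early years. A minimal sketch, assuming straight-line depreciation, zero salvage, and a hypothetical $1 billion of GPUs; it illustrates the mechanism, not his $176 billion estimate.

```python
def cumulative_understatement(cost: float, book_life: float,
                              economic_life: float, years: float) -> float:
    """Economic value lost minus depreciation booked after `years` (straight line)."""
    booked = cost * min(years, book_life) / book_life
    economic = cost * min(years, economic_life) / economic_life
    return economic - booked


COST = 1_000_000_000  # hypothetical GPU spend
for year in range(1, 7):
    gap = cumulative_understatement(COST, book_life=6, economic_life=3, years=year)
    print(f"year {year}: ${gap:,.0f} of lost value not yet booked")
```

In this model the gap peaks at half the cost when the economic life ends and closes by the end of the book life, so the longer schedule shifts expense into later years rather than eliminating it: reported earnings run higher early and lower later.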

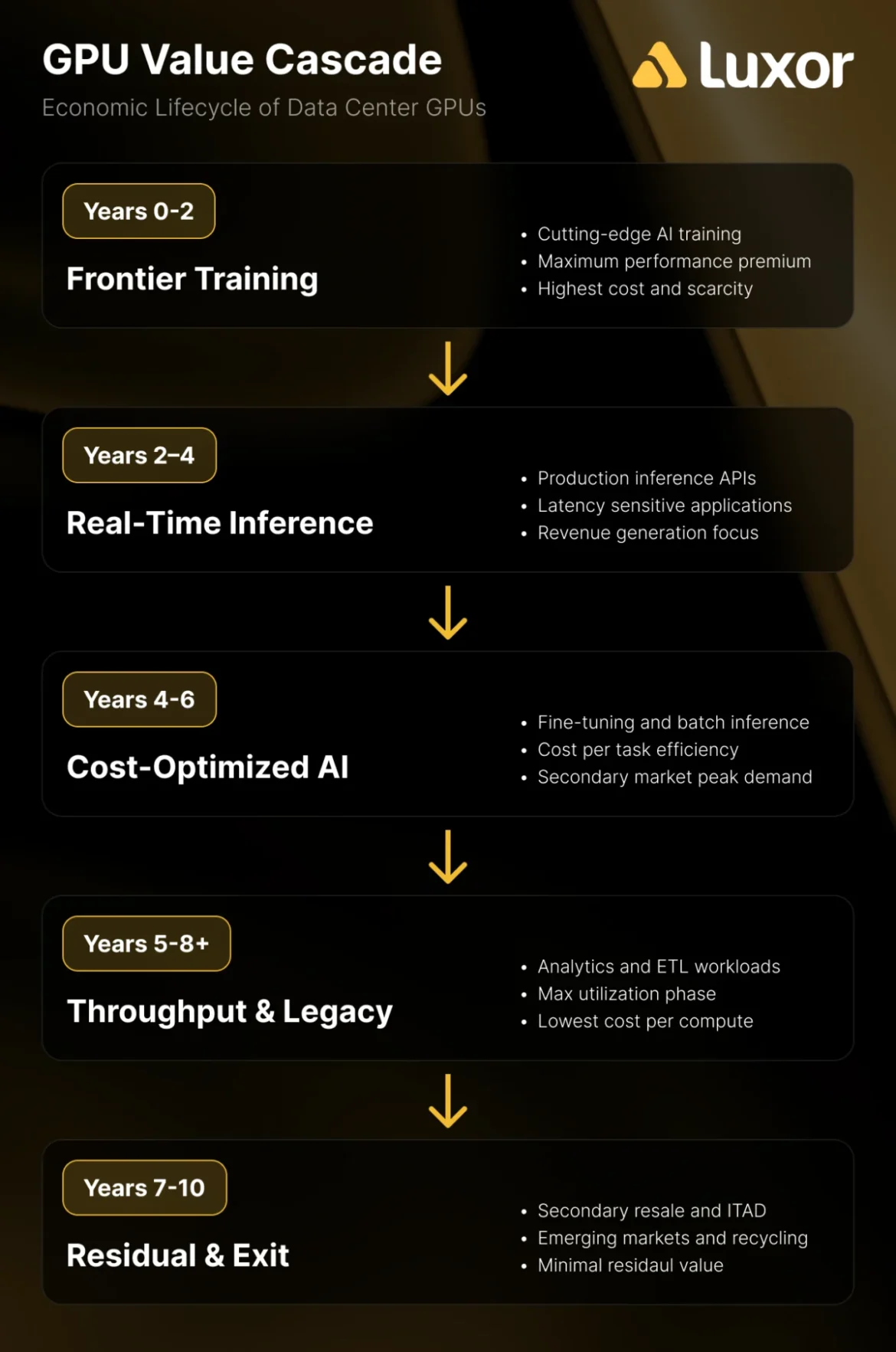

The counter-argument, often called the "Value Cascade," suggests that GPUs follow a five-phase lifecycle:

- Frontier Training (Years 0–2): Leading-edge models are trained on the newest hardware.

- Real-Time Inference (Years 2–4): Hardware moves to high-speed inference tasks.

- Cost-Optimized AI (Years 4–6): Hardware is used for smaller models and fine-tuning.

- Throughput & Legacy (Years 6–8): Batch processing and non-critical workloads.

- Residual Value & ITAD (Years 8–10): Final resale and recycling.
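The five phases amount to a lookup from hardware age to role. A minimal sketch, assuming each shared boundary year belongs to the later phase (the listed ranges share endpoints) and that ages of ten years or more fall outside the model; the phase names come from the list, and the code itself is illustrative.

```python
from bisect import bisect_right

# (start_year, phase) pairs taken from the five-phase "Value Cascade".
PHASES = [
    (0, "Frontier Training"),
    (2, "Real-Time Inference"),
    (4, "Cost-Optimized AI"),
    (6, "Throughput & Legacy"),
    (8, "Residual Value & ITAD"),
]
_STARTS = [start for start, _ in PHASES]
_END_OF_MODEL = 10  # the cascade stops at year 10


def phase_for_age(age_years: float) -> str:
    """Map a GPU's age in years to its assumed Value Cascade phase."""
    if age_years < 0:
        raise ValueError("age must be non-negative")
    if age_years >= _END_OF_MODEL:
        return "Beyond modeled lifecycle"
    # bisect_right puts a boundary year (e.g. 2.0) into the later phase.
    return PHASES[bisect_right(_STARTS, age_years) - 1][1]


if __name__ == "__main__":
    print(phase_for_age(5.7))
```

For example, an A100 released in May 2020 is roughly 5.7 years old in early 2026, which this lookup places in Cost-Optimized AI, the tier the A100 section associates with LoRA fine-tuning.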

Historical data supports this longer view. Microsoft Azure did not retire its K80 and P100 VMs until late 2023, even though those GPUs launched between 2014 and 2016, implying a service life of 7 to 9 years. Similarly, the NVIDIA T4, released in 2018, continues to generate consistent rental revenue in 2026. CoreWeave CEO Michael Intrator has noted that the industry is moving toward "heterogeneous fleets," where older GPUs handle routine inference while the leading edge is reserved for frontier training.

Strategic Implications and Future Risks

The secondary GPU market is no longer a niche for bargain hunters; it is a structural necessity. For many neoclouds, the ability to source used H100s at $150,000 per server instead of new B300s at $500,000 is the difference between a viable business model and insolvency. Furthermore, as OpenAI’s Sachin Katti has noted, "shifting workload economics necessitate heterogeneous fleets." The industry is learning that the most expensive GPU is not the one with the highest price tag, but the one deployed against a workload that doesn’t require its specific capabilities.
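Matching each workload to the least expensive hardware that can run it can be sketched as a greedy cheapest-feasible assignment. This is an illustrative model, not how any named provider schedules its fleet: the hourly rates are hypothetical, memory capacity and FP8 support stand in for "capabilities," and real schedulers also weigh throughput, availability, and interconnect.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class GpuTier:
    name: str
    vram_gb: int
    supports_fp8: bool
    hourly_usd: float  # hypothetical rental rate, not a market quote


@dataclass(frozen=True)
class Workload:
    name: str
    min_vram_gb: int
    needs_fp8: bool = False


# Memory sizes and FP8 support reflect the listed parts; prices are placeholders.
FLEET = [
    GpuTier("T4", 16, False, 0.35),
    GpuTier("A100 80GB", 80, False, 1.20),
    GpuTier("H100 80GB", 80, True, 2.00),
]


def cheapest_fit(job: Workload, fleet: list[GpuTier]) -> Optional[GpuTier]:
    """Return the lowest-cost tier that meets the job's requirements, if any."""
    feasible = [
        g for g in fleet
        if g.vram_gb >= job.min_vram_gb and (g.supports_fp8 or not job.needs_fp8)
    ]
    return min(feasible, key=lambda g: g.hourly_usd, default=None)


if __name__ == "__main__":
    jobs = [
        Workload("quantized 7B chat inference", 8),
        Workload("13B LoRA fine-tune", 40),
        Workload("FP8 model serving", 60, needs_fp8=True),
        Workload("oversized job", 200),
    ]
    for job in jobs:
        tier = cheapest_fit(job, FLEET)
        print(f"{job.name}: {tier.name if tier else 'no tier fits'}")
```

Sending the 13B fine-tune to an H100 would also work, but it would pay for FP8 capability the job never uses, which is exactly the misallocation described above.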

However, several risks loom over this market:

- Accelerated Release Cycles: If NVIDIA maintains a strict annual release cadence (Blackwell in 2024, Blackwell Ultra in 2025, Rubin in 2026, Rubin Ultra in 2027), the "frontier" window for any GPU will shrink, potentially causing a glut of mid-generation hardware that crashes secondary prices.

- Custom ASICs: Hyperscalers are increasingly deploying custom silicon like AWS Inferentia and Google TPUs. While these have not yet disrupted the general-purpose GPU market, they represent a long-term threat to the "Value Cascade" by providing cheaper alternatives for inference.

- Tariffs and Trade Policy: In 2025, new tariff policies created upward pricing pressure on supply chains. Estimates suggest that tariffs could add 20% to 40% to the cost of importing certain server components, narrowing the price gap between new and refurbished systems.

The secondary GPU market has moved beyond its "Wild West" phase into a mature, essential component of the global AI economy. While the debate over hardware longevity remains unresolved, the market data suggests that legacy GPUs retain meaningful economic value far longer than skeptics believe. As the industry shifts from a frantic "get compute at any cost" mentality to a more disciplined "cost-per-task" approach, the secondary market will only grow in importance, acting as the ultimate recycler of capital and compute in the AI era.